Improved performance at a given price point is expected; otherwise, why bother buying the newer cards? The problem is that the value improvements per generation really aren't what they used to be. What we would historically have called mid-range GPUs (based on e.g. die size) are being sold as increasingly expensive high-end cards, with the previous high-end cards being sold as some new e-peen tier for extortionate amounts of money. Some use the slowing of Moore's law as a complete excuse for this, but it isn't one. While the $/transistor seemingly isn't dropping as fast as it used to, due to things like multiple patterning and increasing mask costs, that doesn't come close to justifying the jump in price we've seen in some market segments. This is apparent from the increasing profit margins being published - in one breath Nvidia claims that fab costs are squeezing them, and in the next they're boasting about increased margins - can't have it both ways!

Nvidia just keep pushing prices higher and higher every generation to see what they can get away with, so from a consumer perspective it's perfectly reasonable to be critical of it IMHO. The effect competition has on the market is obvious when you look at the prices of cards from both companies in the segments where AMD actually competes. Meanwhile the Titan has even lost the excuse of being a middle ground between GeForce and the pro cards - the features that defined the original Titan cards have evaporated from all of the recent ones, and it's gotten even more expensive!

Part of the reason we're still seeing good performance gains in GPUs compared to CPUs is down to the nature of the tasks they process - graphics is an 'embarrassingly parallel' task with extremely good parallel scaling, so there is still low-hanging fruit: add more cores to the GPU die and you get a very good return on them. That's not to take away from the huge amount of engineering that goes into GPU design. Even something as 'simple' as 'bolting on a few more cores' isn't straightforward, as you then have to figure out clock and power distribution across a larger die, ensure the processor fabric and caches don't become bottlenecks, etc. And I think it was a blog post from a PowerVR graphics engineer that said there are still lots of new tricks being discovered for graphics processing, e.g. to make better use of the resources you do have.
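To put a rough number on why 'just add more cores' pays off so well for graphics, here's a quick sketch using Amdahl's law. That's my choice of model for illustration, and the parallel fractions and core counts below are made-up assumptions, not measurements of any real GPU or CPU workload.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup from Amdahl's law for a workload where
    `parallel_fraction` of the runtime scales perfectly across `cores`."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical workloads: the fractions are illustrative assumptions only.
    workloads = {
        "graphics-like (99.9% parallel)": 0.999,
        "typical desktop app (50% parallel)": 0.50,
    }
    for name, fraction in workloads.items():
        for cores in (4, 64, 1024):
            speedup = amdahl_speedup(fraction, cores)
            print(f"{name}, {cores} cores: {speedup:.1f}x")
```

With a mostly serial workload the speedup flattens out below 2x no matter how many cores you throw at it, which is roughly the CPU situation; the near-perfectly parallel case keeps scaling for much longer.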

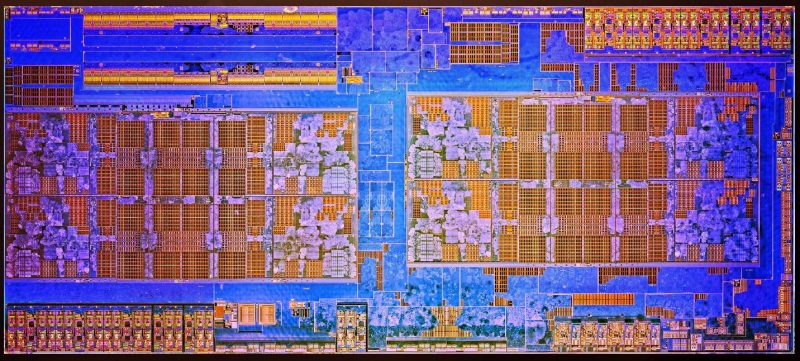

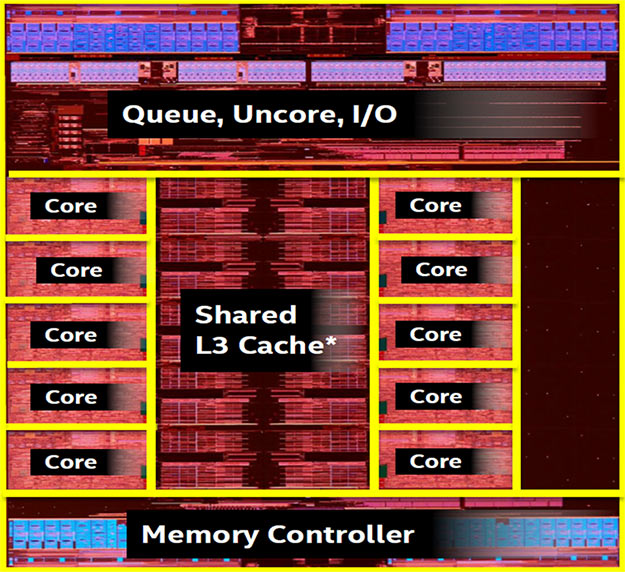

With CPUs, it's harder to make good use of that extra transistor budget. You only have to look at the size of CPUs compared to GPUs - Intel's desktop Skylake processors are barely over 120mm² vs upwards of 500mm² for large GPUs - and most of that CPU die isn't CPU cores at all; it's things like integrated graphics, Northbridge and caches. I estimated Skylake's individual core size without L3 cache to be something like 8mm², and Skylake is a *big* CPU core.
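As a back-of-envelope check on how little of the die the cores take up, here's the arithmetic using the rough figures above. The core count of 4 is my assumption for a desktop Skylake part, and the areas are the same ballpark estimates, so treat the result as approximate.

```python
def core_area_share(core_mm2: float, cores: int, die_mm2: float) -> float:
    """Fraction of the die occupied by the CPU cores themselves (no L3)."""
    return (core_mm2 * cores) / die_mm2


if __name__ == "__main__":
    CORE_MM2 = 8.0    # rough per-core estimate, excluding L3 cache
    CORES = 4         # assumption: quad-core desktop part
    DIE_MM2 = 120.0   # "barely over 120mm²", rounded down

    share = core_area_share(CORE_MM2, CORES, DIE_MM2)
    print(f"Cores: {CORE_MM2 * CORES:.0f} mm² of {DIE_MM2:.0f} mm² ({share:.0%} of the die)")
```

So on these estimates only around a quarter of the die is actual CPU core; the rest goes to the iGPU, caches and uncore.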
